Measuring AI Performance: Are You Leveraging LLMs’ Analytic Capabilities?

Understanding how your artificial intelligence (AI) is performing requires effective analytics tools, a strong grasp of conversational design practices, and a clear sense of your business goals and your customers’ intents.

By Mike Myer, CEO and Founder, Quiq

While a lot has shifted in the automation landscape with the widespread adoption of ChatGPT and other Large Language Models (LLMs), one thing hasn’t changed. Whether you’re building a Natural Language Understanding (NLU) bot or an LLM-powered AI Assistant, you need to understand how your AI is performing.

Anyone who has spent a cursory amount of time attempting to build, deploy, measure, and optimize an automated AI Assistant knows that not all resolved conversations are created equal.

Did the customer leave because they got their issue resolved? Or did they give up because your bot was giving them the same unhelpful answer over and over?

While it might seem impossible to sift through mountains of conversational data, there are a handful of analytics tools and conversational design best practices that can go a long way to ensuring you’re measuring—and optimizing—what matters.

Let’s dive into what those tools and best practices are, starting with techniques to measure customer satisfaction (CSAT).

How to Measure CSAT and Collect Customer Feedback

Analyzing CSAT scores helps you gauge your conversational AI’s success and identify areas for improvement. Surveys and thumbs-up feedback mechanisms are effective techniques for measuring customer satisfaction.

Integrating short surveys at the end of conversations or providing a thumbs-up option during conversations is powerful. It allows customers to express their level of satisfaction easily and directly within the context where they are already engaged. And because you can do it conversationally, you can garner better response rates and more immediate feedback.

We’ll cover a wide range of measurement techniques below, but it’s important to not overlook the simplest technique when trying to understand if an AI Assistant answered the customer’s question: Ask them.

- Use multiple formats, for instance CSAT, free form, 1-5 stars, “Was this helpful?” yes/no, or thumbs up/thumbs down.

- Collect channel-appropriate feedback. Is your primary channel short-lived like web chat, where the customer may close their browser tab and disappear, or is it a more persistent channel, like Short Message Service (SMS), where the conversation is more asynchronous in nature? Your timing should be partially dictated by the channel of engagement.

- Have a clear conversation architecture strategy in place from the beginning, so you can collect feedback at critical moments. Whether that’s after an FAQ answer is served or a conversation has been closed, it is going to be vital to segment this feedback by customer paths or goals.
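
As a rough illustration of segmenting feedback by customer path, here is a minimal sketch in Python. The path names, the sample ratings, and the convention of counting a 4 or 5 on a 1-5 scale as "satisfied" are all assumptions made for the example, not a description of any particular product.

```python
from collections import defaultdict

# Hypothetical survey records: (customer path, rating on a 1-5 scale).
responses = [
    ("faq_answer", 5),
    ("faq_answer", 2),
    ("order_tracking", 4),
    ("order_tracking", 5),
    ("order_tracking", 3),
]

def csat_by_path(records, satisfied_threshold=4):
    """Return the percentage of satisfied responses for each path."""
    totals = defaultdict(int)
    satisfied = defaultdict(int)
    for path, rating in records:
        totals[path] += 1
        if rating >= satisfied_threshold:  # assumed cutoff for "satisfied"
            satisfied[path] += 1
    return {path: 100.0 * satisfied[path] / totals[path] for path in totals}

scores = csat_by_path(responses)
for path, score in scores.items():
    print(f"{path}: {score:.1f}% satisfied")
```

Reporting one score per path, rather than one blended number, makes it easier to see which part of the experience is pulling satisfaction down.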

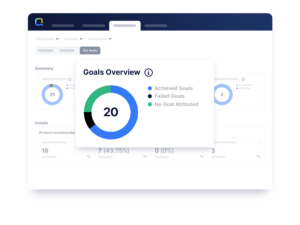

What Are Goals and How Can You Define Them?

Setting clear goals for AI performance measurement is crucial for tracking progress and evaluating success.

While it’s great when customers tell you exactly what they liked or didn’t like about their experience, it’s all but guaranteed that you’re going to get less than a 100% satisfaction rate on your customer satisfaction surveys.

This could be because your AI Assistant delivered the appropriate message, but the customer didn’t like what it said, which may simply reflect company policy. Or perhaps it correctly identified a product the customer is looking for, but that product is out of stock, and the customer is still unhappy.

On the other hand, many users never even reach a point with your AI Assistant to trigger a survey.

That’s why it’s important to be able to use different points in a customer’s journey as a means to silently measure the efficacy of your experience—whether it’s having your AI Assistant ask a certain question, collect a piece of data, or send a particular response.

Ideally, you should be able to measure and report on key milestones in the customer journey to understand how many customers engaged with a particular part of your experience, and how many had a successful or unsuccessful outcome.

For example, if you’ve deployed a generative AI Assistant designed to help customers track and reschedule furniture deliveries, you’d like to know how many customers attempted to track their order, how many successfully rescheduled their order, and how many never successfully entered their order details and got escalated to a live agent.
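
To make the furniture delivery example concrete, here is a minimal sketch of counting goal outcomes from milestone events. The event names and the sample conversations are hypothetical, and the rules for what counts as an "attempt" or a failure are assumptions you would replace with your own definitions.

```python
from collections import Counter

# Hypothetical event logs: conversation id -> ordered milestone events.
conversations = {
    "c1": ["track_order_started", "order_details_entered", "reschedule_completed"],
    "c2": ["track_order_started", "escalated_to_agent"],
    "c3": ["track_order_started", "order_details_entered"],
}

def summarize_goals(logs):
    """Count how many conversations reached each outcome of interest."""
    counts = Counter()
    for events in logs.values():
        seen = set(events)
        if "track_order_started" in seen:
            counts["attempted_tracking"] += 1
        if "reschedule_completed" in seen:
            counts["rescheduled"] += 1
        # Assumed failure rule: escalated before order details were ever entered.
        if "escalated_to_agent" in seen and "order_details_entered" not in seen:
            counts["escalated_without_details"] += 1
    return counts

goal_counts = summarize_goals(conversations)
print(dict(goal_counts))
```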

While the above might seem straightforward, at Quiq we’ve learned there is a non-trivial amount of conversational design work that goes into determining what possible outcomes constitute success or failure, not to mention how to think about what it means for a user to “attempt” something.

The tooling you’re using should be flexible enough to allow you to cover the wide range of ways you may need to track your conversations. While channel surveys add massive value, companies should also send out Customer Effort Score (CES) surveys several days after the interaction to capture the CES across all channels in the service journey.

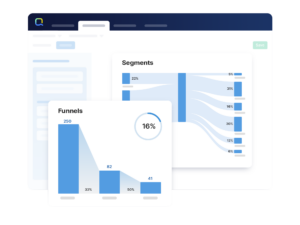

How to Find Where Customers Are Giving Up

Your AI Assistant most likely has several jobs it’s designed to accomplish, whether that’s providing a product recommendation or helping rebook a customer’s flight.

But don’t focus exclusively on analyzing these user journeys at the expense of having a high-level understanding of how users are engaging with your AI Assistant—and what’s changing over time.

A good set of conversational AI tools should enable you to get a bird’s eye view of your experience and allow you to dive into the weeds, ideally at the individual conversation level, to understand exactly what’s going on in your experience and how customer usage and needs evolve over time.

While there are seemingly limitless ways to analyze your AI Assistant, a handful are especially worth considering.

Traffic

Start by analyzing traffic changes. Are you noticing more or fewer customers going down a particular path? Can you dig into groups of conversations at these impacted points and understand what’s changed?

Customer Intent

Shifts in customer intent are often related to changes in traffic. You can identify them by checking whether customers are asking different questions than they were previously. Is your AI Assistant handling this shift well, or do you need to update the AI Assistant?

Dropoff

Is the completion rate lower at certain key points in your experience? Can you visualize this at a glance, with a set of funnels, or a Sankey diagram?
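
One way to spot the weakest point in a funnel is to compute the step-to-step completion rate and look for the lowest one. The sketch below assumes a hypothetical four-step funnel with made-up counts. It is only an illustration of the arithmetic, not a replacement for a visual funnel or Sankey diagram.

```python
# Hypothetical funnel: ordered steps and how many conversations reached each.
funnel_steps = ["greeted", "intent_identified", "details_collected", "resolved"]
reached = {
    "greeted": 1000,
    "intent_identified": 800,
    "details_collected": 400,
    "resolved": 300,
}

def step_conversion(steps, counts):
    """Return (from_step, to_step, completion_rate) for each adjacent pair."""
    rates = []
    for prev, curr in zip(steps, steps[1:]):
        rate = counts[curr] / counts[prev] if counts[prev] else 0.0
        rates.append((prev, curr, rate))
    return rates

rates = step_conversion(funnel_steps, reached)
worst = min(rates, key=lambda r: r[2])  # the transition losing the most customers
print(f"Largest dropoff: {worst[0]} -> {worst[1]} ({worst[2]:.0%} continue)")
```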

Sentiment

Is there a change in customer mood? Whether that’s during the conversation, or due to key facets of your experience, this is an important source of performance knowledge.

Regardless of where you look to find where customers are dropping off, it’s important to have a powerful set of analytics tools that let you visualize, measure, and drill into conversations in a manner appropriate to your team’s workflow.

What’s Different About LLMs?

While much of the above still applies to analyzing AI Assistants that are powered by LLMs, this emerging AI tech presents unique measurement opportunities.

For one, LLMs do a great job of reading and understanding. You can harness this power by developing evaluation and scoring techniques via prompting that will allow you to measure the effectiveness and helpfulness of your AI Assistant.

What if you could measure CSAT without needing to ask for it? Or what if you could identify topics over time where gaps in the knowledge base exist so that you could add to them?

These types of things are possible with the right prompting and context fed into an LLM. Here are other ways to make performance measurement easier with LLM-powered AI.

Have a Large Language Model Review LLM Conversations

Not only can LLMs power the conversation, but they can also analyze it both throughout the experience and upon its conclusion. You can use LLMs to help measure and evaluate the performance of AI Assistants. For example, what was the user’s sentiment in the conversation and how did it change from beginning to end?
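
Here is a minimal sketch of what an LLM-based review step might look like. The prompt wording, the JSON keys, and the `complete` function are all assumptions: `complete` stands in for whatever model call you use, and `fake_complete` exists only so the example runs without a real model.

```python
import json

# Hypothetical grading prompt; the wording and keys are illustrative only.
PROMPT_TEMPLATE = (
    "You are reviewing a customer support transcript.\n"
    "Return only JSON with keys 'resolved' (true or false) and "
    "'helpfulness' (integer 1-5).\n\n"
    "Transcript:\n{transcript}"
)

def review_conversation(transcript, complete):
    """Ask an LLM, via the caller-supplied `complete` function, to grade a transcript."""
    raw = complete(PROMPT_TEMPLATE.format(transcript=transcript))
    try:
        result = json.loads(raw)
    except json.JSONDecodeError:
        return None  # The model did not return valid JSON; treat as ungraded.
    if not isinstance(result, dict):
        return None
    if not isinstance(result.get("resolved"), bool):
        return None
    if result.get("helpfulness") not in range(1, 6):
        return None
    return result

def fake_complete(prompt):
    # Stand-in for a real model call, used only to make the sketch runnable.
    return '{"resolved": true, "helpfulness": 4}'

review = review_conversation(
    "Customer: Where is my order?\nAssistant: It arrives Friday.",
    fake_complete,
)
print(review)
```

Validating the model’s output before storing it matters here, because a grader that occasionally returns malformed results would otherwise skew your metrics without anyone noticing.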

Measure Sentiment Shifts

Rather than simply measuring overall sentiment, layering in sentiment shift allows you to see a more nuanced and complete picture of the AI Assistant’s conversation from the customer’s baseline to the conversation’s completion.

You can also use sentiment analysis at specific points in time to guide the users directly to a live agent if the sentiment score reaches particular levels.
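
The sketch below shows one simple way to express both ideas, assuming each customer message has already been given a sentiment score between -1 (very negative) and 1 (very positive). The scores, the scale, and the escalation threshold of -0.5 are hypothetical values chosen for illustration.

```python
def sentiment_shift(scores):
    """Last score minus first score; a positive value means the mood improved."""
    if len(scores) < 2:
        return 0.0
    return scores[-1] - scores[0]

def should_escalate(scores, threshold=-0.5):
    """True if the most recent score is at or below the assumed threshold."""
    return bool(scores) and scores[-1] <= threshold

# Hypothetical per-message sentiment scores for two conversations.
improving = [-0.6, -0.2, 0.4]
worsening = [0.1, -0.3, -0.7]

print(sentiment_shift(improving), should_escalate(improving))
print(sentiment_shift(worsening), should_escalate(worsening))
```

The first conversation would look mildly negative on average, yet its shift is strongly positive, which is the nuance an overall sentiment score hides.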

Summarize Conversations

Transcripts can become long and tedious to read through. Instead, use an LLM to read and summarize a transcript before handing it off to an agent. Or simply use it to summarize a conversation for record keeping. Auto summarization saves time for agents and analysts alike.

How to Deploy and Manage Changes Safely

When you’re building an AI Assistant, you need to consider not only how your answers appear today, but also how they will change over time as people use new phrases and as you update policies and knowledge base articles.

Use Test Sets to Monitor and Improve AI Assistant Responses

Here’s a scenario: Your knowledge base article addresses how to accomplish something within your app. But then you add functionality and a corresponding knowledge base article.

You can test it out with new user questions to see how it answers, but you’re also going to want to know that the things people were asking before this change are still being answered correctly and that any overlap or contention between articles is still handled well in your generated responses.

This is where test sets come in.

You can run a set of tests where you know the appropriate answer both before and after adding this new article. Make sure the responses continue to look strong even after adding in new knowledge base information.

And the best part? You can use an LLM to automate this process.

Tell the LLM the types of questions users ask, track the responses your AI Assistant gives, measure those responses, and set baselines, then run them again after the new article is added.

Do your new responses continue to track well against the metrics? Awesome! Now you have more data for customers to interact with, you know you can answer additional questions, and you can be confident that the answers generated continue to be effective and accurate.
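
As a minimal sketch of this baseline-and-rerun loop, the example below scores responses with a simple keyword check. That scorer is a deliberately crude stand-in (an LLM-based grader could take its place), and the test cases and the two `assistant_*` functions are hypothetical stubs representing your AI Assistant before and after a knowledge base change.

```python
# Hypothetical test set: each case lists terms a good answer should mention.
test_set = [
    {"question": "How do I reset my password?", "must_mention": ["settings", "reset"]},
    {"question": "How do I export my data?", "must_mention": ["export"]},
]

def score_response(response, must_mention):
    """Fraction of required terms found in the response (a crude stand-in grader)."""
    text = response.lower()
    hits = sum(1 for term in must_mention if term in text)
    return hits / len(must_mention)

def run_test_set(assistant, cases):
    """Score every test question against the given assistant function."""
    return {
        case["question"]: score_response(assistant(case["question"]), case["must_mention"])
        for case in cases
    }

def find_regressions(baseline, current, tolerance=0.0):
    """Questions whose score dropped below the baseline by more than the tolerance."""
    return [q for q, base in baseline.items() if current.get(q, 0.0) < base - tolerance]

def assistant_before(question):
    # Stub for the assistant before the new article was added.
    if "password" in question.lower():
        return "Open Settings and choose Reset Password."
    return "Use the Export button on your profile page."

def assistant_after(question):
    # Stub for the assistant after the change; one answer has degraded.
    if "password" in question.lower():
        return "Please contact support."
    return "Use the Export button on your profile page."

baseline = run_test_set(assistant_before, test_set)
current = run_test_set(assistant_after, test_set)
regressions = find_regressions(baseline, current)
print(regressions)
```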

In addition to testing, you want to be able to monitor events as they happen over time within conversations, so you can pull out user phrases and see how customers’ behavior and language might change over time.

By recording events in your AI Assistant conversations, you can add these to test sets, build out test cases for them, and generally strengthen the robustness of your AI Assistant’s responses.

Final Thoughts

Understanding how your AI is performing requires effective analytics tools, a strong grasp of conversational design practices, and a clear sense of your business goals and your customers’ intents.

But LLMs now present additional measurement opportunities—from leveraging them to analyze conversations and evaluate performance, to measuring sentiment shifts and automating tasks like conversation summarization.

By continuously monitoring events and recording user behavior within and across conversations, you can strengthen your AI Assistant’s responses and improve its overall performance.

Mike Myer, CEO and Founder, Quiq

Mike is the Founder and CEO of Quiq. Before founding Quiq, Mike was Chief Product Officer & VP of Engineering at Dataminr, a startup that analyzes all of the world’s tweets in real-time and detects breaking information ahead of any other source. Mike has deep expertise in customer service software, having previously built the RightNow Customer Experience solution used by many of the world’s largest consumer brands to deliver exceptional interactions.

RightNow went public in 2004 and was acquired by Oracle for $1.5B in 2011. Mike led Engineering the entire time RightNow was a standalone company and later managed a team of nearly 500 at Oracle responsible for Service Cloud. Before RightNow, Mike held various software development and architect roles at AT&T, Lucent and Bell Labs Research. Mike holds a bachelor’s and a master’s degree in Computer Science from Rutgers University.

Quiq is the Conversational Customer Experience Platform for the world’s top brands.

Learn more at Quiq.com
