A Different Kind of AI Conversation

Let me be honest with you: I’ve been to enough industry conferences to know when a room is just going through the motions. At CRS 2026 in Amelia Island, something was different.

When I stepped on stage to lead “When AI Acts: Leading Through the Shift from Copilot to Agent,” I wasn’t there to talk about tools or trends, or which model is on the market right now. I was there to have the harder conversation: the one about whether our organizations are actually ready for what’s coming.

Because here’s the reality: AI isn’t waiting for us to be ready. It’s already acting.

“By 2028, agentic AI is expected to handle 68% of customer experience interactions with technology partners.” — Cisco Global Research, 2025 (7,950 decision-makers across 30 countries)

Sarah Jeanneault presenting at CRS 2026, Amelia Island

The Question That Shifted the Room

I opened with a simple question: “When AI starts acting on behalf of your team – who is accountable?”

You could feel it land. Not because it’s new, but because most of us haven’t answered it yet. And the gap between how fast AI is advancing and how prepared our operations are to support it? That’s the gap I’ve been thinking about every single day.

As long as AI is a chatbot or a copilot, humans are the final decision-makers. The moment AI becomes agentic – resolving transactions, routing cases, applying policy logic without waiting for someone to click approve – the responsibility structure changes entirely. AI becomes embedded in your operations, and your operations had better be ready to hold it.

“93% of IT leaders report intentions to deploy autonomous AI agents within two years, and nearly half have already started.” — MuleSoft 2025 Connectivity Benchmark Report (1,050 IT leaders surveyed)

From Suggesting to Executing

Here’s the progression I walked through, and it’s one that most organizations are living in real time right now:

Stage 1 – AI as Responder: Chatbots that react to prompts, deflect tickets, answer straightforward questions. Reactive. Limited.

Stage 2 – AI as Assistant: Copilots that sit alongside your agents, surfacing information and suggesting next steps. Humans still make the call.

Stage 3 – AI as Actor: Agentic systems that execute. They process requests, make decisions within defined parameters, and move work across systems — independently.

Most organizations I talk to are managing all three simultaneously. And that third stage? That’s not just a technical upgrade. It’s a change in risk, accountability, and operational design.

“23% of organizations are already scaling AI agents — yet operational governance remains the primary barrier to broader deployment.” — McKinsey State of AI, 2025 (1,993 respondents across 105 countries)

The Foundation Beneath the Intelligence

This is where the conversation really shifted. Because we quickly moved past “is your AI smart enough?” to ask a much harder question: is the foundation beneath your AI structured enough?

Most organizations still rely on document-based knowledge: policies written in long-form PDFs, procedures scattered across shared drives, tribal knowledge living in the heads of your most experienced team members. For years, that’s been fine because humans compensate for ambiguity. We fill in the gaps, ask clarifying questions, escalate when we’re not sure.

AI doesn’t do that. AI executes based on the structure it’s given. If your knowledge is fragmented, outdated, or unclear, those weaknesses don’t disappear when AI touches them. They get amplified.

Attendees engage in the Care Gap exercise

Naming the Care Gap

Midway through the session, we stopped theorizing and got practical. I put people into small groups and gave them three questions:

- Where does customer care break in your organization?

- What is the measurable business impact?

- Why does that gap exist structurally?

Each group had to distill their answers into a single Care Gap Statement: the symptom, the impact, and the root cause.

What struck me was how fast people could do it. They didn’t have to think hard about where things break. Inconsistent responses driving repeat contacts. Escalations triggered by unclear decision thresholds. Copilot suggestions overridden because agents don’t trust the logic underneath them. Extended handle times because knowledge lives in four different places and no one can find what they need fast enough.

These aren’t new problems. What changed was the framing. We stopped calling them performance issues and started calling them what they actually are: structural gaps.

“Service reps spend 66% of their time on non-customer-facing tasks. AI implementation is directly tied to reducing that friction — but only when the underlying knowledge is structured.” — Salesforce AI Agent Research, 2024

Turning Breakdowns Into Opportunities

Once you name the gap, you can reframe it. I asked every group to complete this sentence:

“If we improved ___, we could achieve ___, without compromising customer care.”

The most compelling responses weren’t about acquiring better AI. They were about designing better systems. Improving decision logic clarity to reduce override rates. Centralizing and version-controlling workflows to lower escalations. Clarifying exception paths to improve first-call resolution. The emphasis wasn’t the tool; it was the architecture underneath the tool.

A Real-World Example of Structural Change

I shared a story from a large health insurance contact center that illustrates exactly what I mean. Agents were handling complex benefit and claims inquiries, and escalation rates were high. Leadership assumed it was a training problem.

It wasn’t. The knowledge existed. It was just structured in a way that nobody could use confidently under pressure. Policies lived across documents. Decision paths weren’t mapped. So agents defaulted to escalation, not because they didn’t know the answer, but because they couldn’t find it fast enough to trust it.

The organization rebuilt its processes into clear, visual workflows with defined decision logic and governance controls. The policies didn’t change. The structure did. Escalations dropped significantly. First-call resolution improved.

The transformation wasn’t technological. It was architectural.

The Maturity Journey

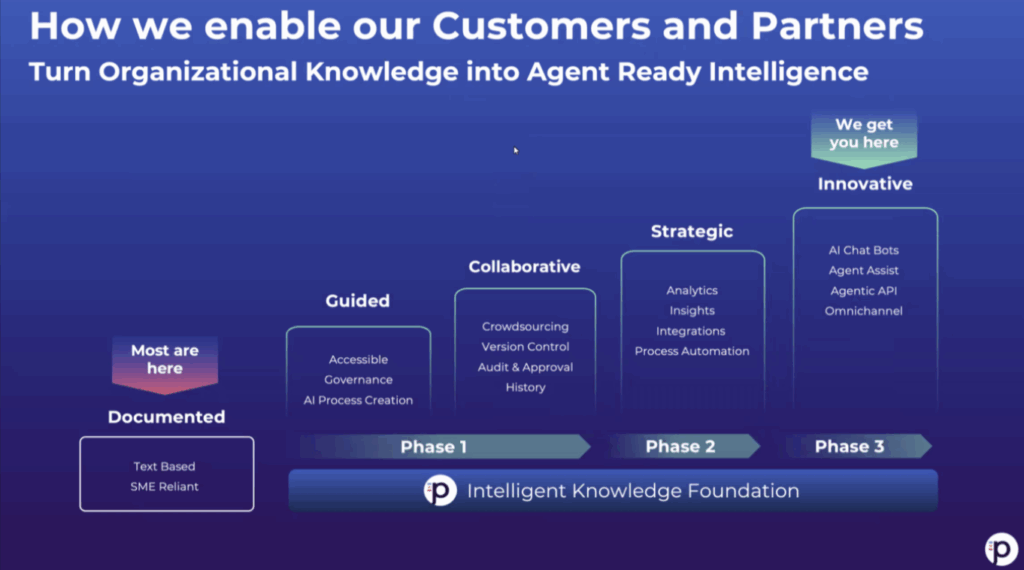

Toward the end of the session, I mapped out the maturity journey that resonated most with the room:

Documented: Information exists but remains static and interpretive. You’re dependent on SMEs to fill the gaps.

Guided: Processes are structured step-by-step. People can follow them without needing prior context.

Collaborative: Governance and version control are formalized. Knowledge is owned, not assumed.

Strategic: Knowledge is connected to analytics and continuous improvement.

Innovative: AI acts autonomously within defined guardrails. This is where the magic happens, but only if the earlier stages are solid.

The sequence matters. I see organizations every week trying to leap directly to “Innovative” because of competitive pressure or boardroom enthusiasm. But automating ambiguity doesn’t eliminate it; it multiplies it. Scaling inconsistency doesn’t fix it; it accelerates it. Delegating judgment to AI without defining the rules introduces risk, not efficiency.

“Gartner predicts agentic AI will autonomously resolve 80% of common customer service issues without human intervention by 2029, leading to a 30% reduction in operational costs.” — Gartner, March 2025

What the Room Carried with Them

As we wrapped, conversations kept going at the tables. Leaders were asking each other: Who currently owns knowledge updates in your organization? Is your decision logic formally documented or just assumed? If AI executed tomorrow based entirely on how your systems are structured today, would you feel confident?

That last question is the one I want to leave you with too. Not because the answer is supposed to be “yes” right now; most of us aren’t there yet. But because the question itself is the leadership shift this moment demands.

The Real Takeaway

AI will keep advancing. Its role in customer experience will keep expanding. The differentiator won’t be who adopts it first.

It will be who builds the strongest operational clarity beneath it.

When AI suggests, humans can adjust. When AI acts, structure determines trust.

That’s the shift. And it extends far beyond technology.

By Sarah Jeanneault, VP of Marketing, Procedureflow

About the Author

Sarah Jeanneault is VP of Marketing at Procedureflow, where she leads growth strategy and customer-centric programs. With 20+ years of experience across startups and enterprises, she focuses on the intersection of operational clarity and AI-readiness in customer experience. She spoke at Customer Response Summit 2026 in Amelia Island.
